Publications

2026

- Coupled Particle Filters for Robust Affordance Estimation
  Patrick Lowing, Vito Mengers, and Oliver Brock
  In International Conference on Robotics and Automation (ICRA), 2026

Robotic affordance estimation is challenging due to visual, geometric, and semantic ambiguities in sensory input. We propose a method that disambiguates these signals using two coupled recursive estimators for sub-aspects of affordances: graspable and movable regions. Each estimator encodes property-specific regularities to reduce uncertainty, while their coupling enables bidirectional information exchange that focuses attention on regions where both agree, i.e., affordances. Evaluated on a real-world dataset, our method outperforms three recent affordance estimators (Where2Act, Hands-as-Probes, and HRP) by 308%, 245%, and 257% in precision, and remains robust under challenging conditions such as low light or cluttered environments. Furthermore, our method achieves a 70% success rate in our real-world evaluation. These results demonstrate that coupling complementary estimators yields precise, robust, and embodiment-appropriate affordance predictions.

@inproceedings{lowin2026, author = {Lowing, Patrick and Mengers, Vito and Brock, Oliver}, title = {Coupled Particle Filters for Robust Affordance Estimation}, year = {2026}, booktitle = {International Conference on Robotics and Automation (ICRA)}, }

- When Gradients Are Enough: Non-Stationary Potential Fields for Reactive Control
  Vito Mengers and Oliver Brock
  In German Robotics Conference (GRC), 2026

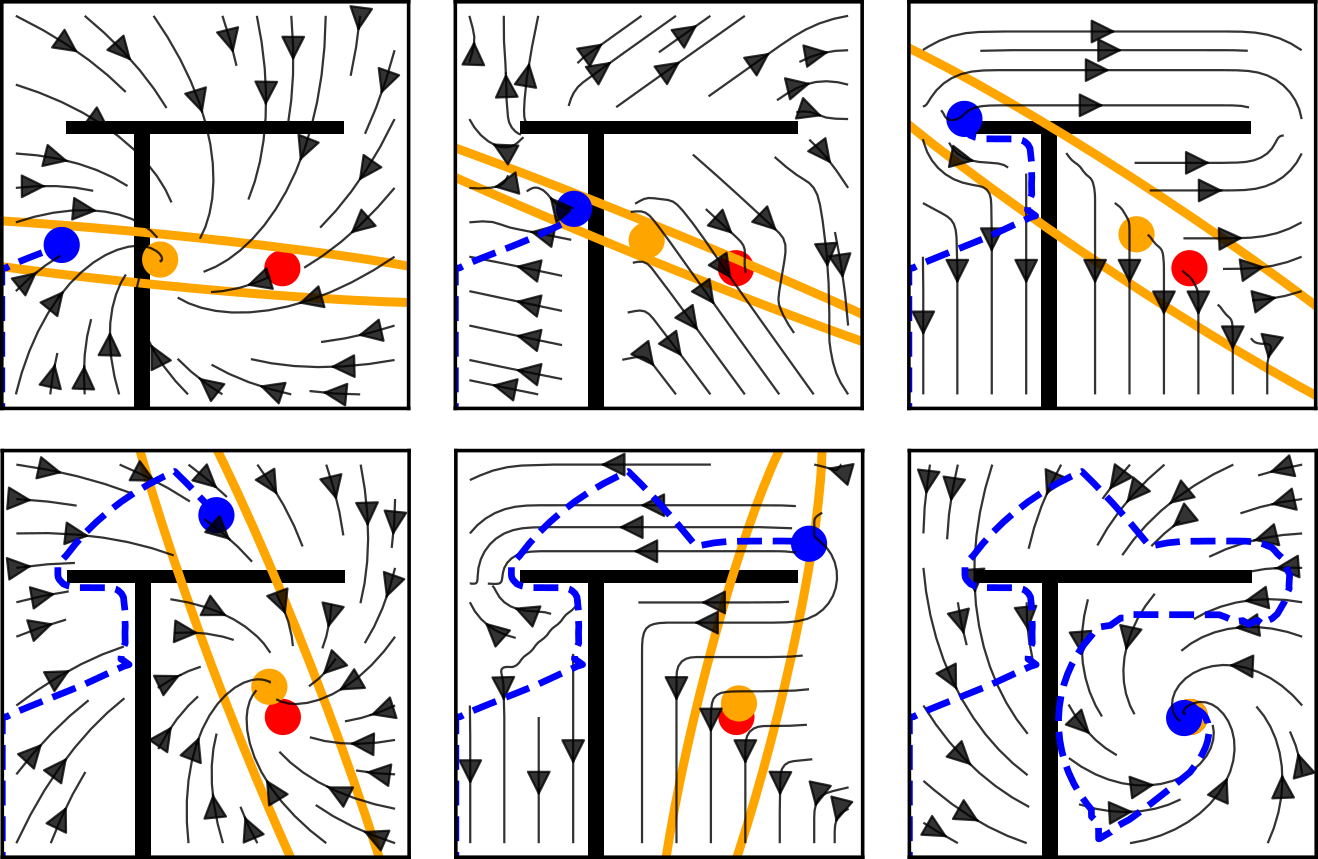

Reactive control based on gradient descent in fixed potential fields is attractive for its robustness and simplicity, but it is fundamentally limited by local minima in complex tasks. This extended abstract presents a general framework for reactive control in which the potential field is made non-stationary in structured ways, allowing a single controller to resolve sequential objectives, multi-objective trade-offs, and exploration without explicit planning or discrete mode switching.

@inproceedings{mengersGRC26, author = {Mengers, Vito and Brock, Oliver}, title = {When Gradients Are Enough: Non-Stationary Potential Fields for Reactive Control}, year = {2026}, booktitle = {German Robotics Conference (GRC)}, }

2025

- A robotics-inspired scanpath model reveals the importance of uncertainty and semantic object cues for gaze guidance in dynamic scenes
  Vito Mengers*, Nicolas Roth*, Oliver Brock**, Klaus Obermayer**, and Martin Rolfs**
  Journal of Vision, 2025

The objects we perceive guide our eye movements when observing real-world dynamic scenes. Yet, gaze shifts and selective attention are critical for perceiving details and refining object boundaries. Object segmentation and gaze behavior are, however, typically treated as two independent processes. Here, we present a computational model that simulates these processes in an interconnected manner and allows for hypothesis-driven investigations of distinct attentional mechanisms. Drawing on an information processing pattern from robotics, we use a Bayesian filter to recursively segment the scene, which also provides an uncertainty estimate for the object boundaries that we use to guide active scene exploration. We demonstrate that this model closely resembles observers’ free viewing behavior on a dataset of dynamic real-world scenes, measured by scanpath statistics, including foveation duration and saccade amplitude distributions used for parameter fitting and higher-level statistics not used for fitting. These include how object detections, inspections, and returns are balanced and a delay of returning saccades without an explicit implementation of such temporal inhibition of return. Extensive simulations and ablation studies show that uncertainty promotes balanced exploration and that semantic object cues are crucial to forming the perceptual units used in object-based attention. Moreover, we show how our model’s modular design allows for extensions, such as incorporating saccadic momentum or presaccadic attention, to further align its output with human scanpaths.

@article{mengers2025robotics, title = {A robotics-inspired scanpath model reveals the importance of uncertainty and semantic object cues for gaze guidance in dynamic scenes}, author = {Mengers, Vito and Roth, Nicolas and Brock, Oliver and Obermayer, Klaus and Rolfs, Martin}, journal = {Journal of Vision}, volume = {25}, number = {2}, year = {2025}, publisher = {The Association for Research in Vision and Ophthalmology}, }

- No Plan but Everything Under Control: Robustly Solving Sequential Tasks with Dynamically Composed Gradient Descent
  Vito Mengers and Oliver Brock
  In International Conference on Robotics and Automation (ICRA), 2025

ICRA Best Paper in Planning and Control

“For challenging the planning paradigm by introducing a feedback-driven method that solves sequential tasks through continuous adaptation.”

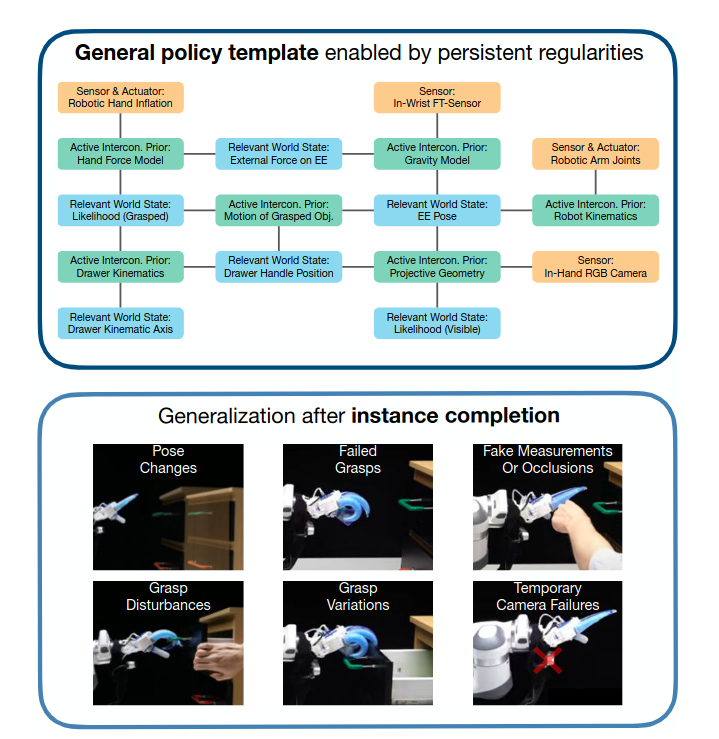

Also finalist for the Best Paper (Overall), Best Student Paper, and Best Paper in Robot Learning.

We introduce a novel gradient-based approach for solving sequential tasks by dynamically adjusting the underlying myopic potential field in response to feedback and the world’s regularities. This adjustment implicitly considers subgoals encoded in these regularities, enabling the solution of long sequential tasks, as demonstrated by solving the traditional planning domain of Blocks World, without any planning. Unlike conventional planning methods, our feedback-driven approach adapts to uncertain and dynamic environments, as demonstrated by one hundred real-world trials involving drawer manipulation. These experiments highlight the robustness of our method compared to planning and show how interactive perception and error recovery naturally emerge from gradient descent without explicitly implementing them. This offers a computationally efficient alternative to planning for a variety of sequential tasks, while aligning with observations on biological problem-solving strategies.

@inproceedings{mengers2025no, title = {No Plan but Everything Under Control: Robustly Solving Sequential Tasks with Dynamically Composed Gradient Descent}, author = {Mengers, Vito and Brock, Oliver}, booktitle = {International Conference on Robotics and Automation (ICRA)}, pages = {90--96}, year = {2025}, organization = {IEEE}, url = {https://rbo.gitlab-pages.tu-berlin.de/papers/mengers-icra-25/}, }

- A Helping (Human) Hand in Kinematic Structure Estimation
  Adrian Pfisterer*, Xing Li*, Vito Mengers, and Oliver Brock
  In International Conference on Robotics and Automation (ICRA), 2025

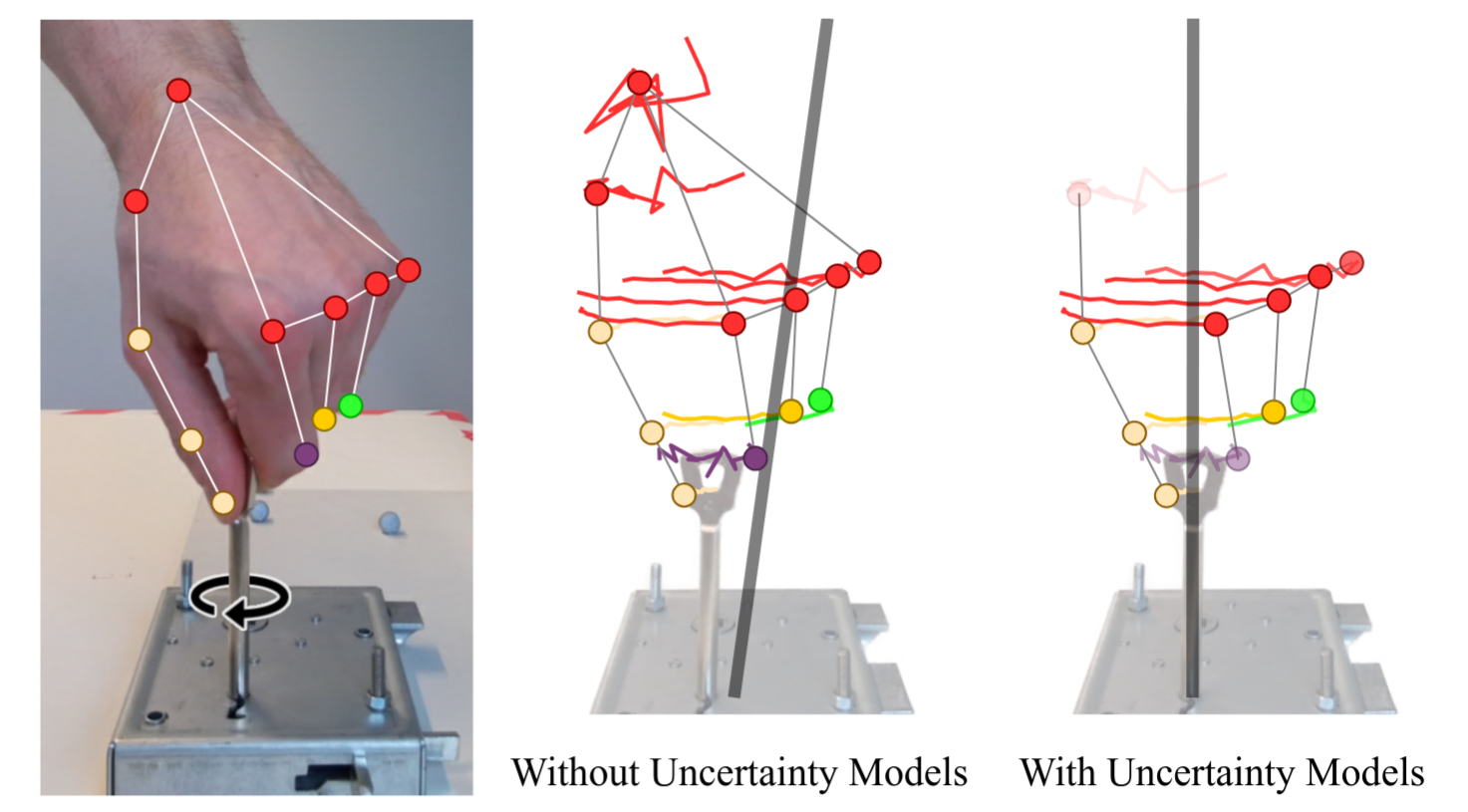

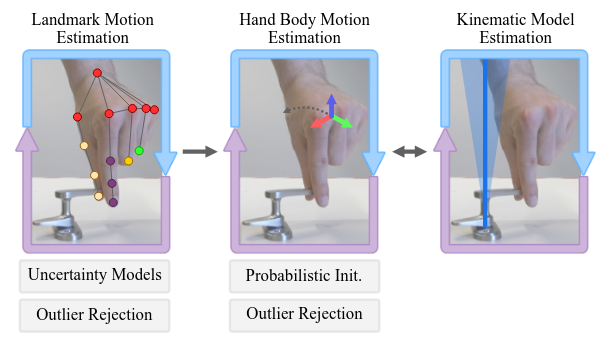

Visual uncertainties such as occlusions, lack of texture, and noise present significant challenges in obtaining accurate kinematic models for safe robotic manipulation. We introduce a probabilistic real-time approach that leverages the human hand as a prior to mitigate these uncertainties. By tracking the constrained motion of the human hand during manipulation and explicitly modeling uncertainties in visual observations, our method reliably estimates an object’s kinematic model online. We validate our approach on a novel dataset featuring challenging objects that are occluded during manipulation and offer limited articulations for perception. The results demonstrate that by incorporating an appropriate prior and explicitly accounting for uncertainties, our method produces accurate estimates, outperforming two recent baselines by 195% and 140%, respectively. Furthermore, we demonstrate that our approach’s estimates are precise enough to allow a robot to manipulate even small objects safely.

@inproceedings{pfisterer2025helping, title = {A Helping (Human) Hand in Kinematic Structure Estimation}, author = {Pfisterer, Adrian and Li, Xing and Mengers, Vito and Brock, Oliver}, booktitle = {International Conference on Robotics and Automation (ICRA)}, pages = {11918--11925}, year = {2025}, organization = {IEEE}, }

- Stop Merging, Start Separating: Why Merging Learning and Modeling Won’t Solve Manipulation but Separating the General From the Specific Will
  Vito Mengers*, Alexander Koenig*, Xing Li*, Adrian Sieler, Aravind Battaje, and Oliver Brock
  In ICRA Workshop: Learning Meets Model-Based Methods for Contact-Rich Manipulation, 2025

Recent progress in robot manipulation can be attributed to two developments: first, the application of novel learning methods, and second, the use of expertly crafted models. Consequently, merging these two developments seems a promising path for further progress. However, this only works if obtaining policies from learning and modeling possess synergistic properties. We argue that this is not necessarily the case. We discuss the reasons and suggest an alternative view of what can accelerate progress in manipulation. We then recall that this alternative view is already well-established in seminal works in robotics and show, based on our own work, that this view continues to produce advances in robotic manipulation.

@inproceedings{mengers2025stop, title = {Stop Merging, Start Separating: Why Merging Learning and Modeling Won’t Solve Manipulation but Separating the General From the Specific Will}, author = {Mengers, Vito and Koenig, Alexander and Li, Xing and Sieler, Adrian and Battaje, Aravind and Brock, Oliver}, booktitle = {ICRA Workshop: Learning Meets Model-Based Methods for Contact-Rich Manipulation}, year = {2025}, }

- AICON: A Representation for Adaptive Behavior
  Vito Mengers*, Aravind Battaje*, and Oliver Brock
  In German Robotics Conference (GRC), 2025

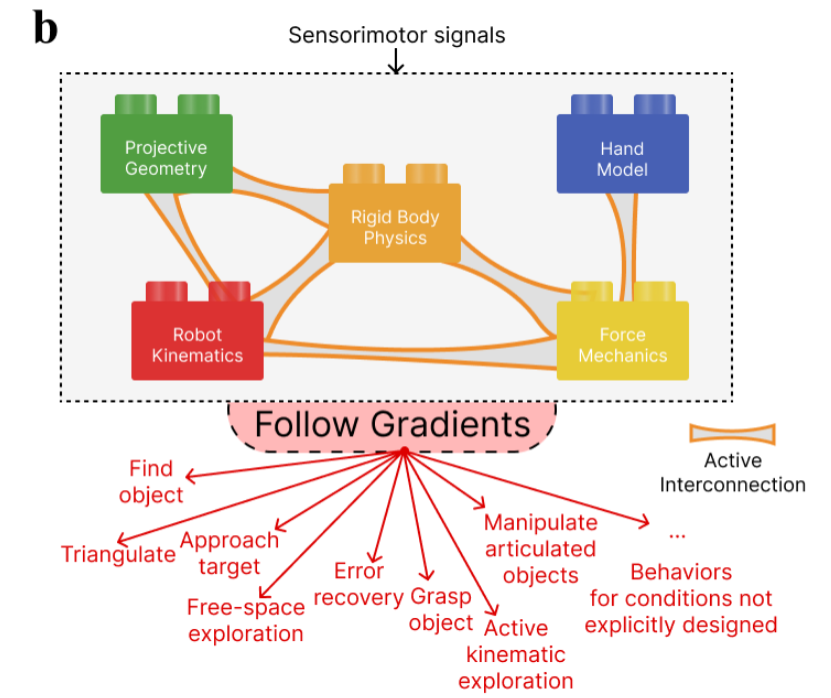

Robot behavior needs to be robust. To achieve robustness, traditional approaches attempt to anticipate all possible situations the robot could encounter and account for them in a policy. But how do we represent the behavior so that it also remains robust in unanticipated situations? Here, we present AICON (Active InterCONnect), a framework that generates behavior without directly encoding it. Instead, we dynamically compose multiple sensorimotor regularities to simultaneously estimate relevant states and obtain action gradients. Using these gradients, we can generate robust robotic behavior for real-world tasks even for unanticipated scenarios and large disturbances. We also show that AICON can be used to study biology as it possesses characteristics similar to biological information processing.

@inproceedings{mengersbattajeGRC25, author = {Mengers, Vito and Battaje, Aravind and Brock, Oliver}, title = {AICON: A Representation for Adaptive Behavior}, year = {2025}, booktitle = {German Robotics Conference (GRC)}, }

- A Handy Solution to Kinematic Structure Estimation
  Adrian Pfisterer*, Xing Li*, Vito Mengers, and Oliver Brock
  In German Robotics Conference (GRC), 2025

Programming by Demonstration enables intuitive robot learning but suffers from a critical limitation: during human demonstrations, the object and its motion are often occluded, especially for small objects that require precise manipulation models. We address this challenge by inferring object kinematics directly from human hand motion, leveraging the insight that the hand’s movement is partially constrained by the manipulated object. By using hand motion as a prior and incorporating uncertainty models grounded in hand properties to reject outliers and quantify confidence, our approach achieves accurate online estimation even under severe occlusion and noisy, small-scale motions. We validate the method on a newly introduced benchmark dataset of ten small objects—including challenging examples such as keys and sliding locks—where it outperforms recent baselines by 195% and 140%, and we demonstrate robust real-world robotic manipulation from a single human demonstration.

@inproceedings{pfistererGRC2025, author = {Pfisterer, Adrian and Li, Xing and Mengers, Vito and Brock, Oliver}, title = {A Handy Solution to Kinematic Structure Estimation}, year = {2025}, booktitle = {German Robotics Conference (GRC)}, }

2024

- Leveraging uncertainty in collective opinion dynamics with heterogeneity
  Vito Mengers*, Mohsen Raoufi*, Oliver Brock**, Heiko Hamann**, and Pawel Romanczuk**
  Scientific Reports, 2024

Natural and artificial collectives exhibit heterogeneities across different dimensions, contributing to the complexity of their behavior. We investigate the effect of two such heterogeneities on collective opinion dynamics: heterogeneity of the quality of agents’ prior information and of degree centrality in the network. To study these heterogeneities, we introduce uncertainty as an additional dimension to the consensus opinion dynamics model, and consider a spectrum of heterogeneous networks with varying centrality. By quantifying and updating the uncertainty using Bayesian inference, we provide a mechanism for each agent to adaptively weigh their individual against social information. We observe that uncertainties develop throughout the interaction between agents, and capture information on heterogeneities. Therefore, we use uncertainty as an additional observable and show the bidirectional relation between centrality and information quality. In extensive simulations on heterogeneous opinion dynamics with Gaussian uncertainties, we demonstrate that uncertainty-driven adaptive weighting leads to increased accuracy and speed of consensus, especially with increasing heterogeneity. We also show the detrimental effect of overconfident central agents on consensus accuracy, which can pose challenges in designing such systems. The opportunities for improved performance and observability suggest the importance of considering uncertainty both for the study of natural and the design of artificial heterogeneous systems.

@article{mengers2024leveraging, title = {Leveraging uncertainty in collective opinion dynamics with heterogeneity}, author = {Mengers, Vito and Raoufi, Mohsen and Brock, Oliver and Hamann, Heiko and Romanczuk, Pawel}, journal = {Scientific Reports}, volume = {14}, number = {1}, pages = {27314}, year = {2024}, publisher = {Nature Publishing Group UK London}, }
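
To make the uncertainty-driven weighting mentioned in the abstract above concrete, here is a minimal Python sketch. It is not the paper's model: it assumes each agent holds a scalar Gaussian belief and fuses it with a single neighbor's belief by a precision-weighted average, treating the two beliefs as independent. The names `Belief` and `fuse` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Belief:
    """Scalar Gaussian opinion: a mean and a variance (assumed representation)."""
    mean: float
    var: float


def fuse(own: Belief, neighbor: Belief) -> Belief:
    """Fuse two independent Gaussian beliefs (product of Gaussians).

    Each belief is weighted by its precision (1 / variance), so the more
    certain belief pulls the fused mean toward itself.
    """
    w_own = 1.0 / own.var
    w_nb = 1.0 / neighbor.var
    fused_var = 1.0 / (w_own + w_nb)
    fused_mean = fused_var * (w_own * own.mean + w_nb * neighbor.mean)
    return Belief(fused_mean, fused_var)


# Illustrative values only: one confident agent and one uncertain neighbor.
confident = Belief(mean=0.0, var=1.0)
uncertain = Belief(mean=10.0, var=4.0)
fused = fuse(confident, uncertain)
print(fused)
```

With these illustrative values the fused mean is about 2.0, much closer to the confident agent's opinion, and the fused variance (about 0.8) is smaller than either input. The independence assumption is a simplification: in a network with repeated exchanges, the same information can be counted more than once, which makes agents overconfident.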

2023

- Combining Motion and Appearance for Robust Probabilistic Object Segmentation in Real Time
  Vito Mengers, Aravind Battaje, Manuel Baum, and Oliver Brock
  In International Conference on Robotics and Automation (ICRA), 2023

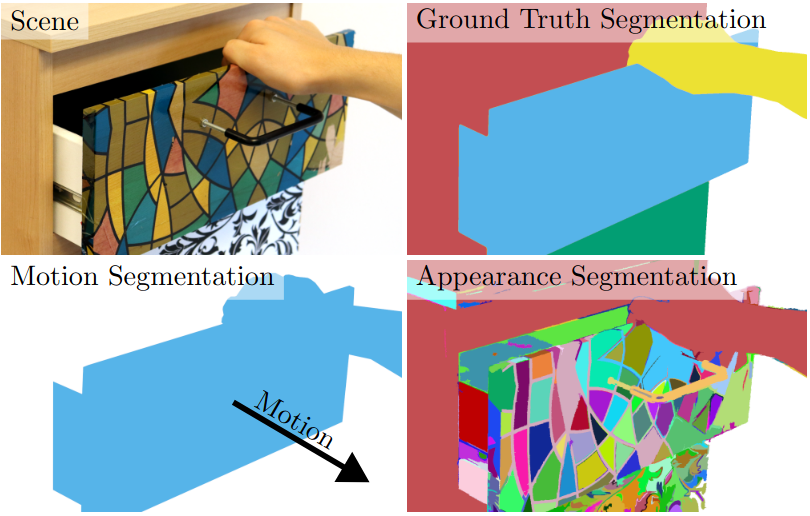

We present a robust method to visually segment scenes into objects based on motion and appearance. Both these cues provide complementary information that we fuse using two interconnected recursive estimators: One estimates object segmentation from motion as a probabilistic clustering of tracked 3D points, and the other estimates object segmentation from appearance as a probabilistic image segmentation. The interconnected estimators provide a probabilistic and consistent object segmentation in real time, which makes them well suited for many downstream robotic tasks. We evaluate our method on one such task, kinematic structure estimation, on a dataset of interactions with articulated objects and show that our fusion improves object segmentation by 70% and in turn estimated kinematic joints by 26% over a purely motion-based approach. Furthermore, we show the necessity of probabilistic modeling for downstream robotic tasks, achieving 339% of the performance of a recent multimodal but deterministic RNN for object segmentation on the estimation of kinematic structure.

@inproceedings{mengers2023combining, title = {Combining Motion and Appearance for Robust Probabilistic Object Segmentation in Real Time}, author = {Mengers, Vito and Battaje, Aravind and Baum, Manuel and Brock, Oliver}, booktitle = {International Conference on Robotics and Automation (ICRA)}, pages = {683--689}, year = {2023}, organization = {IEEE}, }